Least Squares Regression Line Calculator

The gold standard for linear modeling. Minimize residuals and maximize predictive accuracy with our professional-grade OLS best-fit engine.

Least Squares Regression Line

Compute the least squares regression line instantly. Enter your data to find the OLS best-fit line equation y = mx + b with comprehensive math solutions.


How to Use This Least Squares Regression Line Calculator

Best-Fit Line

Computes the OLS regression line y = mx + b that minimizes squared residuals.

Enter X & Y Data

Input paired data points as comma-separated values or paste from a spreadsheet.

Step-by-Step Output

Get slope, intercept, R², intermediate sums, and residual diagnostics.

Best For

Linear trend analysis, prediction, and introductory statistics coursework.

Check the residual plot for patterns — a clear pattern suggests a linear model may not be the best fit.

What Is the Least Squares Regression Line?

📊 The least squares regression line (LSRL) is the straight line that minimizes the sum of the squared vertical distances between observed data points and the line. Mathematically, if we have n data points (x₁, y₁), (x₂, y₂), …, (xₙ, yₙ), the LSRL is the line ŷ = mx + b that minimizes the quantity Σ(yᵢ − ŷᵢ)², where ŷᵢ = mxᵢ + b are the predicted values.

🏆 This criterion is called the least squares criterion, and the resulting line is also known as the Ordinary Least Squares (OLS) line or the line of best fit. The method of least squares has a rich history dating back to Adrien-Marie Legendre in 1805, who published it as a way to determine the orbits of comets, and Carl Friedrich Gauss, who claimed he had been using it since 1795 and later developed it further in his theory of errors.

⚠️ The name "least squares" comes directly from the mathematical objective: among all possible lines, we choose the one that makes the sum of squared residuals as small as possible, the least sum of squares. Squaring the residuals (rather than taking absolute values) ensures that positive and negative errors do not cancel each other out. It also gives more weight to larger deviations, which is useful when large errors are especially costly but also makes the line sensitive to outliers.

📊 Today, the least squares regression line is the most widely used method for fitting a straight line through data points in statistics, economics, the natural sciences, social sciences, and engineering. It serves as the foundation for more advanced techniques including multiple regression, generalized linear models, and many machine learning algorithms, and introductory statistics courses typically teach it as the cornerstone of regression analysis.

Least Squares Regression Line Formula Explained

📐 The least squares regression line is defined by the equation ŷ = mx + b, where m is the slope representing the rate of change in Y for each unit increase in X, and b is the y-intercept representing the predicted value of Y when X equals zero. These two parameters completely determine the line and are estimated from the sample data using the method of least squares.

📐 The slope and intercept are determined by solving the normal equations, which are derived using calculus: set the partial derivatives of the sum of squared residuals with respect to m and b equal to zero. This yields a system of two linear equations: Σy = nb + mΣx and Σxy = bΣx + mΣx².

📊 Solving these simultaneously gives the closed-form formulas m = SSxy / SSxx and b = ȳ − m·x̄, where SSxy = Σ(xᵢ − x̄)(yᵢ − ȳ) is the corrected sum of cross-products (equal to n − 1 times the sample covariance of X and Y), SSxx = Σ(xᵢ − x̄)² is the sum of squared deviations of X from its mean, and x̄ and ȳ are the sample means of X and Y respectively.
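As a cross-check of the two routes described above, here is a minimal Python sketch (standard library only; the function names are illustrative and not part of this calculator) that solves the normal equations directly with Cramer's rule and, separately, applies the closed-form deviation formulas.

```python
def solve_normal_equations(xs, ys):
    """Solve  Σy = n·b + m·Σx  and  Σxy = b·Σx + m·Σx²  for (m, b) by Cramer's rule."""
    n = len(xs)
    sum_x, sum_y = sum(xs), sum(ys)
    sum_xx = sum(x * x for x in xs)
    sum_xy = sum(x * y for x, y in zip(xs, ys))
    # Determinant of the coefficient matrix [[n, Σx], [Σx, Σx²]].
    det = n * sum_xx - sum_x ** 2
    if det == 0:
        raise ValueError("all x values are equal, so the slope is undefined")
    m = (n * sum_xy - sum_x * sum_y) / det
    b = (sum_y * sum_xx - sum_x * sum_xy) / det
    return m, b


def fit_by_deviations(xs, ys):
    """Closed form: m = SSxy / SSxx and b = ȳ − m·x̄."""
    n = len(xs)
    x_bar, y_bar = sum(xs) / n, sum(ys) / n
    ss_xy = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys))
    ss_xx = sum((x - x_bar) ** 2 for x in xs)
    m = ss_xy / ss_xx
    return m, y_bar - m * x_bar


xs, ys = [1, 2, 3, 4, 5], [2, 4, 5, 4, 7]
print(solve_normal_equations(xs, ys))
print(fit_by_deviations(xs, ys))
```

For the five-point dataset (1, 2), (2, 4), (3, 5), (4, 4), (5, 7) worked through below, both functions return a slope of 1.0 and an intercept of 1.4, up to floating-point rounding. The deviation form is usually preferred in practice because subtracting the means first reduces rounding error when the X values are large.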

📐 An important property is that the regression line always passes through the point (x̄, ȳ) — the centroid of the data. The coefficient of determination R² = 1 − SSres/SStot measures the proportion of variance in Y explained by the regression model, where SSres = Σ(yᵢ − ŷᵢ)² is the residual sum of squares and SStot = Σ(yᵢ − ȳ)² is the total sum of squares. R² ranges from 0 to 1, with values closer to 1 indicating a better fit.
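A short Python sketch (standard library only; `fit_line` and `r_squared` are hypothetical helper names) that computes R² from SSres and SStot and checks numerically that the fitted line passes through (x̄, ȳ):

```python
def fit_line(xs, ys):
    """Least squares slope and intercept via m = SSxy / SSxx, b = ȳ − m·x̄."""
    n = len(xs)
    x_bar, y_bar = sum(xs) / n, sum(ys) / n
    ss_xy = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys))
    ss_xx = sum((x - x_bar) ** 2 for x in xs)
    m = ss_xy / ss_xx
    return m, y_bar - m * x_bar


def r_squared(xs, ys, m, b):
    """R² = 1 − SSres / SStot for the line ŷ = m·x + b.

    For the least squares line (with an intercept) this lies in [0, 1];
    for an arbitrary line it can be negative.
    """
    y_bar = sum(ys) / len(ys)
    ss_res = sum((y - (m * x + b)) ** 2 for x, y in zip(xs, ys))
    ss_tot = sum((y - y_bar) ** 2 for y in ys)
    return 1 - ss_res / ss_tot


xs, ys = [1, 2, 3, 4, 5], [2, 4, 5, 4, 7]
m, b = fit_line(xs, ys)
x_bar, y_bar = sum(xs) / len(xs), sum(ys) / len(ys)
# The fitted value at x̄ should equal ȳ (up to rounding).
print("line passes through centroid:", abs((m * x_bar + b) - y_bar) < 1e-12)
print("R² =", round(r_squared(xs, ys, m, b), 3))
```

On the five-point example used in the manual calculation below, this should report that the line passes through the centroid and give R² ≈ 0.758.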

🎯 The least squares method minimizes the sum of squared residuals because squaring accomplishes two critical goals: it makes all errors positive so they cannot cancel each other out, and it penalizes larger deviations more heavily than smaller ones, which aligns with the statistical goal of avoiding large prediction errors.

📊 Alternative approaches like minimizing the sum of absolute deviations (LAD regression) exist but lack the convenient mathematical properties of least squares, such as closed-form solutions, differentiability, and a direct connection to maximum likelihood estimation when errors are normally distributed. The Gauss-Markov theorem further justifies the LSRL: if the errors have zero mean, constant variance, and are uncorrelated, then among all linear unbiased estimators the OLS estimator has the smallest variance.

| Component | Symbol | Description |
|---|---|---|
| Slope | m | Rate of change in Y per unit X |
| Y-Intercept | b | Predicted Y when X = 0 |
| Sum of Cross-Products | SSxy | Σ(xᵢ − x̄)(yᵢ − ȳ) |
| Sum of Squares of X | SSxx | Σ(xᵢ − x̄)² |
| Mean of X | x̄ | Σxᵢ / n |
| Mean of Y | ȳ | Σyᵢ / n |
| Coefficient of Determination | R² | Proportion of variance in Y explained |

How to Calculate the Least Squares Regression Line

Manual Calculation

Calculating the least squares regression line by hand follows a systematic process. We will walk through each step using the five data points (1, 2), (2, 4), (3, 5), (4, 4), (5, 7) as a worked example:

- Compute the means: x̄ = (1 + 2 + 3 + 4 + 5) / 5 = 15/5 = 3 and ȳ = (2 + 4 + 5 + 4 + 7) / 5 = 22/5 = 4.4. The point (x̄, ȳ) = (3, 4.4) is the centroid of the data, and the regression line will always pass through this point.

- Calculate deviations from the mean: For each data point, find (xᵢ − x̄) and (yᵢ − ȳ). The x-deviations are −2, −1, 0, 1, 2. The y-deviations are −2.4, −0.4, 0.6, −0.4, 2.6. These deviations measure how far each observation lies from the center of the dataset.

- Compute cross-products and squared deviations: Multiply (xᵢ − x̄)(yᵢ − ȳ) for each point to get 4.8, 0.4, 0, −0.4, 5.2. Sum them: SSxy = 10.0. Square each (xᵢ − x̄) to get 4, 1, 0, 1, 4. Sum them: SSxx = 10.0. These sums are the building blocks of the slope formula.

- Find the slope: m = SSxy / SSxx = 10.0 / 10.0 = 1.0. This means Y increases by 1 unit for each unit increase in X. A slope of 1 indicates a direct one-to-one relationship between X and Y on average.

- Find the intercept: b = ȳ − m·x̄ = 4.4 − 1.0 × 3 = 1.4. This is the predicted Y value when X = 0. In this example, the intercept of 1.4 may or may not have a meaningful real-world interpretation depending on the context.

- Write the equation: ŷ = 1.0x + 1.4. Verify by plugging in x-values: ŷ(1) = 2.4, ŷ(2) = 3.4, ŷ(3) = 4.4, ŷ(4) = 5.4, ŷ(5) = 6.4. Compare these predicted values with the actual Y values to see the residuals.

- Compute R²: SStot = Σ(yᵢ − ȳ)² = 5.76 + 0.16 + 0.36 + 0.16 + 6.76 = 13.2. SSres = Σ(yᵢ − ŷᵢ)² = 0.16 + 0.36 + 0.36 + 1.96 + 0.36 = 3.2. R² = 1 − 3.2/13.2 ≈ 0.758. The model explains about 75.8% of the variance in Y, which is a moderately good fit.
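The same hand calculation can be reproduced in a few lines of Python. This is a minimal sketch using only the standard library; `lsrl_steps` is an illustrative name, and the dictionary keys simply mirror the symbols used above.

```python
def lsrl_steps(xs, ys):
    """Follow the manual steps: means, deviations, sums, slope, intercept, R²."""
    n = len(xs)
    x_bar = sum(xs) / n
    y_bar = sum(ys) / n
    dx = [x - x_bar for x in xs]          # x-deviations (xᵢ − x̄)
    dy = [y - y_bar for y in ys]          # y-deviations (yᵢ − ȳ)
    ss_xy = sum(a * c for a, c in zip(dx, dy))
    ss_xx = sum(a * a for a in dx)
    m = ss_xy / ss_xx
    b = y_bar - m * x_bar
    y_hat = [m * x + b for x in xs]       # predicted values ŷᵢ
    ss_tot = sum(d * d for d in dy)
    ss_res = sum((y - yh) ** 2 for y, yh in zip(ys, y_hat))
    return {
        "x_bar": x_bar, "y_bar": y_bar, "SSxy": ss_xy, "SSxx": ss_xx,
        "m": m, "b": b, "y_hat": y_hat, "SStot": ss_tot, "SSres": ss_res,
        "R2": 1 - ss_res / ss_tot,
    }


steps = lsrl_steps([1, 2, 3, 4, 5], [2, 4, 5, 4, 7])
for key in ("x_bar", "y_bar", "SSxy", "SSxx", "m", "b", "SStot", "SSres", "R2"):
    print(f"{key:>6} = {steps[key]:.4f}")
```

Running it should print x̄ = 3, ȳ = 4.4, SSxy = SSxx = 10, m = 1, b = 1.4, SStot = 13.2, SSres = 3.2, and R² ≈ 0.7576, matching the steps above.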

Using Our Tool

Our calculator performs all of these steps automatically. Simply (1) enter your data pairs into the calculator's data table, (2) click the Calculate button, and (3) review the complete results, including the regression equation (ŷ = 1.0x + 1.4 for the example above), slope, intercept, R², and a full step-by-step breakdown of every intermediate calculation. This eliminates manual computation errors and saves significant time, especially with larger datasets where hand calculations become impractical.

Residual Analysis and Diagnostics

📊 A residual is the difference between an observed Y value and the value predicted by the regression line: eᵢ = yᵢ − ŷᵢ. Positive residuals mean the model underpredicted (the actual Y is above the line), and negative residuals mean the model overpredicted (the actual Y is below the line). Residuals are the key diagnostic tool for evaluating whether the least squares regression line is an appropriate model for your data.

📊 A residual plot — which graphs residuals on the vertical axis against predicted values (or X values) on the horizontal axis — should show a random scatter of points centered around zero with no discernible pattern. If the residual plot shows a curved pattern, the relationship is non-linear and a polynomial or transformation may be needed.
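To build a residual plot you first need the (predicted value, residual) pairs. A minimal Python sketch, standard library only, with `residual_table` as a hypothetical helper name; the pairs can be passed to any plotting tool:

```python
def residual_table(xs, ys):
    """Return (fitted value ŷᵢ, residual eᵢ = yᵢ − ŷᵢ) pairs for the least squares line."""
    n = len(xs)
    x_bar, y_bar = sum(xs) / n, sum(ys) / n
    ss_xy = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys))
    ss_xx = sum((x - x_bar) ** 2 for x in xs)
    m = ss_xy / ss_xx
    b = y_bar - m * x_bar
    return [(m * x + b, y - (m * x + b)) for x, y in zip(xs, ys)]


rows = residual_table([1, 2, 3, 4, 5], [2, 4, 5, 4, 7])
for fitted, resid in rows:
    print(f"fitted = {fitted:4.1f}   residual = {resid:+.1f}")
```

For the worked example above this gives residuals of −0.4, +0.6, +0.6, −1.4, and +0.6, which sum to zero, as least squares residuals always do when the model includes an intercept. Five points are too few to judge a pattern, so treat this only as a demonstration of the arithmetic.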

📊 If the spread of residuals increases or decreases across the plot, this indicates heteroscedasticity (non-constant variance), which violates a core OLS assumption and makes standard errors unreliable. If residuals cluster on one side of zero in certain X regions, the model may be systematically biased there. Checking homoscedasticity (constant variance of residuals) is essential for reliable hypothesis tests and confidence intervals.

🏆 You should also examine the normality of residuals — while exact normality is not required for the LSRL to be the best linear unbiased estimator (BLUE), it is needed for valid p-values and confidence intervals in small samples. Use a histogram or normal probability plot of residuals to assess normality visually, or run a formal test such as the Jarque-Bera test. For a comprehensive automated check of all regression assumptions, use our Regression Assumptions Checker.

Least Squares Regression Line Prediction Calculator

📐 Once you have the regression equation ŷ = mx + b, you can use it to predict Y values for any given X value using the formula ŷ₀ = mx₀ + b. This is one of the most powerful applications of regression analysis — the ability to forecast outcomes for new, unobserved values of the predictor variable. However, it is critical to distinguish between interpolation and extrapolation.

📊 Interpolation means predicting within the range of your observed X values. For example, if your data spans X = 2 to X = 10, predicting at X = 5 is interpolation and is generally reliable, because the linear pattern can be checked against the data throughout that range.

📊 Extrapolation means predicting outside that range — predicting at X = 15 or X = 0 when your data starts at X = 2 is extrapolation, which can be highly unreliable because the linear relationship may not hold beyond the observed range. The relationship could curve, level off, or reverse direction outside the data range, and you would have no way to detect this from the data alone.
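One simple safeguard is to have the prediction step report whether x₀ falls outside the observed range. A minimal Python sketch under that assumption (`make_predictor` is an illustrative name; treating anything outside [min X, max X] as extrapolation is a simplification):

```python
def make_predictor(xs, ys):
    """Fit ŷ = m·x + b and return a function giving (ŷ₀, is_extrapolation) for a new x₀."""
    n = len(xs)
    x_bar, y_bar = sum(xs) / n, sum(ys) / n
    ss_xy = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys))
    ss_xx = sum((x - x_bar) ** 2 for x in xs)
    m = ss_xy / ss_xx
    b = y_bar - m * x_bar
    x_min, x_max = min(xs), max(xs)

    def predict(x0):
        # Outside [x_min, x_max] we are extrapolating beyond the observed data.
        return m * x0 + b, not (x_min <= x0 <= x_max)

    return predict


predict = make_predictor([1, 2, 3, 4, 5], [2, 4, 5, 4, 7])
print(predict(2.5))  # interpolation
print(predict(10))   # extrapolation
```

With the worked-example data (X from 1 to 5), `predict(2.5)` returns ŷ ≈ 3.9 flagged as interpolation, while `predict(10)` returns ŷ ≈ 11.4 flagged as extrapolation.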

✅ Always report predictions with appropriate caution and include the R² value so that users understand the uncertainty involved. For the most reliable results, limit predictions to the observed range of X and use prediction intervals to quantify uncertainty.

1. Stay within the observed X range for the most reliable predictions; interpolated values are far more trustworthy than extrapolated ones.

2. Always report R² alongside predictions so that stakeholders understand how much of the variance the model actually explains.

3. Consider the prediction interval, not just the point estimate: the interval quantifies uncertainty and widens as you move away from the mean of X.

4. Remember that the regression line predicts the average Y for a given X, not a specific individual outcome; there is natural variability around the line.
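The prediction interval for a single new observation at x₀ is ŷ₀ ± t*·s·√(1 + 1/n + (x₀ − x̄)²/SSxx), where s is the residual standard error. A minimal Python sketch of that formula, standard library only; because Python's standard library has no t-distribution, the critical value t* is passed in by the caller (the 3.182 below is the two-sided 95% value for 3 degrees of freedom from a t-table):

```python
import math


def prediction_interval(xs, ys, x0, t_crit):
    """Interval ŷ₀ ± t*·s·√(1 + 1/n + (x₀ − x̄)² / SSxx) for one new observation at x₀.

    t_crit is the two-sided critical value for n − 2 degrees of freedom,
    supplied by the caller (for example, from a t-table).
    """
    n = len(xs)
    if n < 3:
        raise ValueError("need at least 3 points to estimate s")
    x_bar, y_bar = sum(xs) / n, sum(ys) / n
    ss_xy = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys))
    ss_xx = sum((x - x_bar) ** 2 for x in xs)
    m = ss_xy / ss_xx
    b = y_bar - m * x_bar
    ss_res = sum((y - (m * x + b)) ** 2 for x, y in zip(xs, ys))
    s = math.sqrt(ss_res / (n - 2))  # residual standard error
    y0 = m * x0 + b
    half_width = t_crit * s * math.sqrt(1 + 1 / n + (x0 - x_bar) ** 2 / ss_xx)
    return y0 - half_width, y0 + half_width


xs, ys = [1, 2, 3, 4, 5], [2, 4, 5, 4, 7]
T_95_DF3 = 3.182  # two-sided 95% critical value for df = 3, from a t-table
for x0 in (3, 5):
    low, high = prediction_interval(xs, ys, x0, T_95_DF3)
    print(f"x0 = {x0}: ({low:.2f}, {high:.2f})")
```

For the five-point example this gives roughly (0.80, 8.00) at x₀ = 3 and roughly (2.24, 10.56) at x₀ = 5: the (x₀ − x̄)² term makes the interval wider the farther x₀ is from x̄.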

How to Find the Least Squares Regression Line on a Calculator

TI-84 Plus

- Press STAT → select 1:Edit → enter X values in list L1 and Y values in list L2 (press ENTER after each entry)

- Press STAT → right-arrow to CALC menu → select 4:LinReg(ax+b)

- To store the equation for graphing, press VARS → Y-VARS → 1:Function → 1:Y1 before pressing ENTER on LinReg

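- Press ENTER to calculate. The screen displays a (slope), b (intercept), r², and r; if r² and r do not appear, press 2nd → CATALOG → find DiagnosticOn → press ENTER twice, then run LinReg again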

- The equation is displayed as y = ax + b where a = slope and b = intercept (note: TI uses a for slope, not m)

- To graph, press Y= to verify the equation is stored in Y1, then press GRAPH. Use ZOOM → 9:ZoomStat for automatic window fitting

- For predictions, press 2nd → CALC → Value, enter an X value, and read the corresponding ŷ from the curve

TI-83 Plus

- Press STAT → select 1:Edit → enter X values in L1 and Y values in L2 (press ENTER after each entry)

- Press STAT → right-arrow to CALC menu → scroll to 4:LinReg(ax+b) → press ENTER

- To store the equation, press VARS → Y-VARS → 1:Function → 1:Y1 before pressing ENTER on LinReg

- Press ENTER to run the calculation — the screen displays a (slope), b (intercept), r², and r

- The equation format is y = ax + b. If r² and r are not shown, press 2nd → CATALOG → find DiagnosticOn → press ENTER twice to enable

- To graph, press Y= to confirm the equation is stored, then GRAPH. Press ZOOM → 9:ZoomStat for automatic window fitting

Casio fx-9860GII

- Press MENU → select STAT → enter X values in List 1 and Y values in List 2

- Press CALC (F2) → select REG → choose X for linear regression

- Press EXE to calculate — the screen displays a (slope), b (intercept), r, and r²

- The equation format is y = ax + b where a = slope and b = intercept

- To graph, press DRAW (F6) after calculation to see the scatter plot with the regression line overlaid

- For predictions, use the CALC → Value function after graphing to find ŷ for a given x

TI-Nspire

- Add a Lists & Spreadsheet page → enter X values in column A and Y values in column B

- Press Menu → Statistics → Stat Calculations → Linear Regression (mx + b)

- Select the X list and Y list, then press OK to calculate

- The results show m (slope), b (intercept), r², and r in a results table

- To graph, add a Data & Statistics page, add X and Y variables, then press Menu → Analyze → Regression → Show Linear (mx + b)

Our online calculator provides more detailed step-by-step breakdowns than any physical calculator, including all intermediate calculations (means, deviations, cross-products, squared deviations) and an interactive graph with the regression line and data points overlaid. It also uses the standard notation ŷ = mx + b consistently, whereas different calculator brands may use a for slope (TI-84/83, Casio) or other conventions.

When to Use Least Squares Regression

📐 Least squares regression is the appropriate tool when the relationship between your variables is approximately linear and you need to quantify that relationship, make predictions, or test hypotheses about the slope. Ideal use cases include analyzing trends over time, establishing calibration curves, quantifying dose-response relationships, modeling supply and demand, and predicting outcomes from input variables.

📐 The method is particularly powerful when you need interpretable coefficients (the slope directly tells you the expected change in Y per unit X) and when you need formal inference such as confidence intervals and hypothesis tests.

⚠️ However, the least squares method is not appropriate for every situation. Do not use it when the scatter plot shows obvious curvature (consider a quadratic regression or exponential regression instead), when outliers exert disproportionate influence on the line (use robust regression methods), when the variance of residuals changes across the range of X (use weighted least squares), or when you need to model binary or count outcomes (use logistic or Poisson regression respectively). Always inspect a scatter plot before choosing a regression model: a visual check can prevent you from fitting a line to data that is clearly curved or contains extreme outliers.

- Linear trend analysis in business and economics

- Quality control and process monitoring

- Calibration curves in laboratory settings

- Educational teaching of fundamental statistics

- Any situation requiring the best-fit straight line

Frequently Asked Questions

What is the least squares regression line?

📊 The least squares regression line is the straight line ŷ = mx + b that minimizes the sum of squared residuals Σ(yᵢ − ŷᵢ)². It is the best-fit line through your data points in the sense that no other line produces a smaller sum of squared vertical distances between observed and predicted values. It is also called the OLS line or the line of best fit.

How do I calculate the LSRL by hand?

🧮 To calculate the least squares regression line manually, follow these steps:

- Compute the means x̄ and ȳ.

- For each point, calculate deviations (xᵢ − x̄) and (yᵢ − ȳ).

- Compute SSxy = Σ(xᵢ − x̄)(yᵢ − ȳ) and SSxx = Σ(xᵢ − x̄)².

- Find the slope m = SSxy / SSxx.

- Find the intercept b = ȳ − m·x̄.

- Write the equation ŷ = mx + b.

💻 Our calculator performs all of these steps automatically with detailed intermediate results.

What does R² tell me?

📐 R² (the coefficient of determination) measures the proportion of variance in Y explained by the regression model. It ranges from 0 to 1:

- R² = 1 — the model perfectly fits the data (all points lie on the line)

- R² = 0 — the model explains none of the variance (horizontal line at ȳ)

- R² > 0.7 — generally considered a good fit

- R² > 0.9 — an excellent fit

- R² < 0.5 — the linear model may be inappropriate

🤔 However, context matters — in some fields like social sciences, R² values of 0.3 may be acceptable.

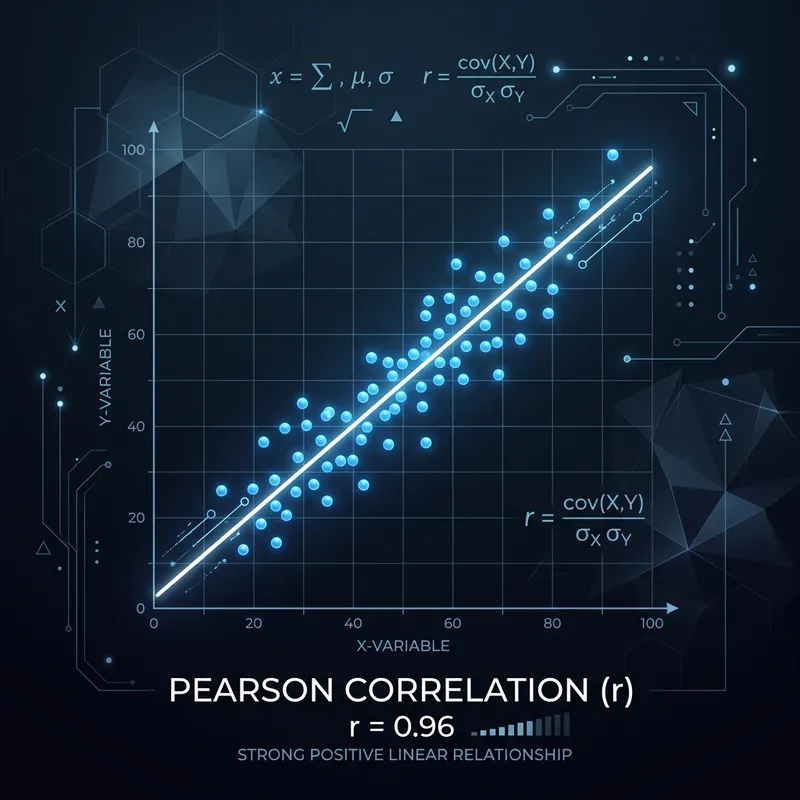

What's the difference between correlation and regression?

📐 Correlation measures the strength and direction of the linear relationship between two variables, producing a single number r between −1 and +1. Regression goes further by fitting a predictive equation ŷ = mx + b that allows you to estimate Y from X.

📐 Correlation is symmetric (r(X,Y) = r(Y,X)), while regression is not: regressing Y on X gives a different line than regressing X on Y, unless r = ±1. In fact, the slope m = r · (sᵧ/sₓ), where sᵧ and sₓ are the standard deviations of Y and X. Use our Pearson Correlation Calculator when you only need to measure association, and use this regression calculator when you need to predict Y from X.
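Both relationships can be checked numerically. A minimal Python sketch (standard library only; `slopes_both_ways` is an illustrative name) that fits Y on X and X on Y and compares them with r:

```python
import math
from statistics import stdev


def slopes_both_ways(xs, ys):
    """Return r, the least squares slope of Y on X, and the slope of X on Y."""
    n = len(xs)
    x_bar, y_bar = sum(xs) / n, sum(ys) / n
    ss_xx = sum((x - x_bar) ** 2 for x in xs)
    ss_yy = sum((y - y_bar) ** 2 for y in ys)
    ss_xy = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys))
    r = ss_xy / math.sqrt(ss_xx * ss_yy)
    return r, ss_xy / ss_xx, ss_xy / ss_yy


xs, ys = [1, 2, 3, 4, 5], [2, 4, 5, 4, 7]
r, m_y_on_x, m_x_on_y = slopes_both_ways(xs, ys)
print(round(r, 4))                                         # correlation r
print(math.isclose(m_y_on_x, r * stdev(ys) / stdev(xs)))   # checks m = r·(sᵧ/sₓ)
# Rewriting the X-on-Y line as y in terms of x gives slope 1 / m_x_on_y.
print(round(m_y_on_x, 4), round(1 / m_x_on_y, 4))
```

On the five-point example, r ≈ 0.8704, the Y-on-X slope is 1.0, and the X-on-Y line rewritten as y in terms of x has slope 1/0.7576 = 1.32, so the two fitted lines differ. The product of the two slopes equals r², which is why they coincide only when r = ±1.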

When should I use quadratic instead of linear regression?

✅ Use quadratic regression instead of linear regression when your scatter plot shows a clear curve or U-shaped pattern. Key indicators include: a low R² from the linear model, a curved pattern in the residual plot, or domain knowledge suggesting a peak or valley in the relationship. For example, crop yield vs. fertilizer often shows diminishing returns and eventually a decline at high application rates (an inverted U), making quadratic regression more appropriate than linear.

How many data points do I need?

📊 The mathematical minimum is 2 data points with different X values, just enough to define a line. However, for meaningful statistical results you should have at least 5 to 10 points.

📐 For reliable confidence intervals and hypothesis tests, aim for 20 or more. The more data you have, the narrower your confidence intervals will be and the more stable your slope and intercept estimates become. With n = 2 the line passes exactly through both points, so R² = 1 automatically and no degrees of freedom remain for estimating error; with n = 3 only one degree of freedom remains, so standard errors and tests are extremely imprecise.

What if my R² is very low?

📊 A low R² means the linear model explains little of the variance in Y. This could indicate several things:

- The relationship is non-linear — try a quadratic or exponential model instead

- There is high natural variability in Y that no model can capture

- Important predictor variables are missing — consider multiple regression with additional predictors

- The data contains outliers that are inflating the residual sum of squares

🔍 Always inspect your scatter plot and residual plot before drawing conclusions from R² alone.

Can I use this for time series data?

📊 You can use least squares regression for time series data, but with caution. The main risk is autocorrelation: consecutive residuals tend to be correlated in time series, violating the independence assumption. With positive autocorrelation, standard errors are typically understated, which can make the slope look more statistically significant than it really is and produce unreliable confidence intervals.

📊 Check the Durbin-Watson statistic to detect autocorrelation: values near 2 indicate little autocorrelation, values near 0 suggest positive autocorrelation, and values near 4 suggest negative autocorrelation. For strongly autocorrelated data, consider ARIMA models or include lag terms. Our Regression Assumptions Checker tests for autocorrelation automatically.
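The Durbin-Watson statistic is DW = Σ(eₜ − eₜ₋₁)² / Σeₜ², computed on residuals in time order. A minimal Python sketch, with two hypothetical residual series chosen to show each extreme:

```python
def durbin_watson(residuals):
    """DW = Σ_{t=2..n} (e_t − e_{t−1})² / Σ_{t=1..n} e_t², for residuals in time order."""
    num = sum((e1 - e0) ** 2 for e0, e1 in zip(residuals, residuals[1:]))
    den = sum(e * e for e in residuals)
    return num / den


# Hypothetical residual series, in time order.
trending = [1.0, 1.2, 0.9, -0.8, -1.1, -1.0]     # long runs of the same sign
alternating = [1.0, -1.1, 0.9, -1.0, 1.2, -0.9]  # sign flips every step
print(round(durbin_watson(trending), 2))
print(round(durbin_watson(alternating), 2))
```

The first series, with long runs of the same sign, gives DW ≈ 0.51 (positive autocorrelation); the second, which flips sign at every step, gives DW ≈ 3.39 (negative autocorrelation). Formal decisions use tabulated lower and upper bounds that depend on n and the number of predictors.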

What is the residual standard error?

📊 The residual standard error (also called the standard error of the estimate) measures the typical distance between the observed values and the regression line. Some software labels it root mean square error, although RMSE is often computed with n rather than n − 2 in the denominator.

📊 It is calculated as s = √(SSres / (n − 2)), where SSres is the residual sum of squares and n − 2 is the degrees of freedom. A smaller residual standard error indicates a tighter fit. Approximately 95% of the data points should fall within ±2s of the regression line if the residuals are normally distributed.
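A minimal Python sketch of this formula (standard library only; `residual_standard_error` is an illustrative name):

```python
import math


def residual_standard_error(xs, ys):
    """s = √(SSres / (n − 2)) for the least squares line."""
    n = len(xs)
    if n < 3:
        raise ValueError("need at least 3 points so that n − 2 is positive")
    x_bar, y_bar = sum(xs) / n, sum(ys) / n
    ss_xy = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys))
    ss_xx = sum((x - x_bar) ** 2 for x in xs)
    m = ss_xy / ss_xx
    b = y_bar - m * x_bar
    ss_res = sum((y - (m * x + b)) ** 2 for x, y in zip(xs, ys))
    return math.sqrt(ss_res / (n - 2))


print(round(residual_standard_error([1, 2, 3, 4, 5], [2, 4, 5, 4, 7]), 4))
```

For the five-point worked example, SSres = 3.2 and n − 2 = 3, so s = √(3.2/3) ≈ 1.0328.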

How do I interpret the slope?

📐 The slope m tells you the expected change in Y for a one-unit increase in X. For example, if m = 2.5, then Y is predicted to increase by 2.5 units each time X goes up by 1.

📐 A positive slope indicates an increasing trend, a negative slope indicates a decreasing trend, and a slope near zero suggests little or no linear relationship. Always consider the context: a slope of 0.01 in dollars might be trivial, but a slope of 0.01 in infection rates could be practically important.

What's the difference between LSRL and OLS?

🔑 LSRL (Least Squares Regression Line) and OLS (Ordinary Least Squares) are essentially the same thing. LSRL refers specifically to the fitted line in simple linear regression (one predictor), while OLS is the more general method that also applies to multiple regression with several predictors. "Ordinary" distinguishes it from variants like Weighted Least Squares (WLS) or Generalized Least Squares (GLS). The underlying principle — minimizing Σ(yᵢ − ŷᵢ)² — is identical.

Can I predict outside my data range?

📊 Technically yes — you can plug any X value into ŷ = mx + b — but extrapolation is risky. The linear relationship observed within your data range may not hold outside it.

⚠️ For example, a linear trend in sales from 2010–2020 may not continue through 2030 if market conditions change. Always flag extrapolated predictions with a caution note and consider whether the linear assumption is reasonable for the range you are predicting into. Interpolation (predicting within the observed range) is far more reliable.

What if my data has outliers?

📊 Outliers can dramatically affect the least squares regression line because squaring residuals gives them disproportionate weight — a single extreme point can pull the line far from the bulk of the data. If you suspect outliers, first check whether they are data-entry errors and correct or remove them if so.

💻 If the outliers are genuine, consider using robust regression methods such as RANSAC, Theil-Sen estimation, or M-estimation, which are less sensitive to extreme values. You can also use our Grubbs' Test Calculator to formally test whether an extreme point is a statistical outlier.
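To illustrate how a robust method reacts to an outlier, here is a minimal Python sketch of the basic Theil-Sen estimator: the slope is the median of all pairwise slopes, and the intercept is taken as the median of yᵢ − m·xᵢ (one common convention; implementations differ). It uses only the standard library, and the outlier value 30 is hypothetical:

```python
from itertools import combinations
from statistics import median


def ols_slope(xs, ys):
    """Ordinary least squares slope SSxy / SSxx, for comparison."""
    n = len(xs)
    x_bar, y_bar = sum(xs) / n, sum(ys) / n
    ss_xy = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys))
    ss_xx = sum((x - x_bar) ** 2 for x in xs)
    return ss_xy / ss_xx


def theil_sen(xs, ys):
    """Median of pairwise slopes; intercept taken as median(yᵢ − m·xᵢ)."""
    slopes = [
        (y2 - y1) / (x2 - x1)
        for (x1, y1), (x2, y2) in combinations(zip(xs, ys), 2)
        if x2 != x1  # pairs with equal x have no defined slope
    ]
    m = median(slopes)
    b = median([y - m * x for x, y in zip(xs, ys)])
    return m, b


xs = [1, 2, 3, 4, 5]
clean = [2, 4, 5, 4, 7]
with_outlier = [2, 4, 5, 4, 30]  # hypothetical: last Y entered as 30
for label, ys in (("clean", clean), ("outlier", with_outlier)):
    print(label, "OLS slope:", round(ols_slope(xs, ys), 2),
          "Theil-Sen slope:", round(theil_sen(xs, ys)[0], 2))
```

On the clean five-point data both methods give a slope of 1.0. Replacing the last Y with 30 pulls the OLS slope to 5.6, while the Theil-Sen slope only moves to 1.75.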

How do I check regression assumptions?

🔍 Check each of the four OLS assumptions systematically:

- Linearity: Plot Y vs. X and residuals vs. predicted values — look for curves.

- Independence: Use the Durbin-Watson test — values near 2 indicate no autocorrelation.

- Homoscedasticity: Plot residuals vs. predicted values — the spread should be roughly constant (no funnel shape). Use the Breusch-Pagan test for a formal check.

- Normality: Create a histogram or Q-Q plot of residuals. Use the Jarque-Bera test for a formal check.

🔍 For an automated comprehensive check, use our Regression Assumptions Checker which tests all four assumptions at once.

What is multicollinearity?

📊 Multicollinearity occurs when two or more predictor variables are highly correlated with each other, making it difficult to separate their individual effects on Y.

📊 This is a problem in multiple regression (not simple linear regression with one predictor). It inflates the standard errors of the coefficients, making the estimates unstable and potentially insignificant even when the overall model fits well. Detect it with variance inflation factors (a VIF above 5 is a common warning sign; some analysts use 10 as the cutoff) or a correlation matrix of predictors. Remedies include dropping one of the correlated predictors, combining them via PCA, or using ridge regression.
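When a model has exactly two predictors, regressing one on the other gives R² = r₁₂², so each predictor's VIF is 1/(1 − r₁₂²). A minimal Python sketch of that special case, using hypothetical predictor columns (models with more predictors need a full auxiliary regression for each one):

```python
import math


def vif_two_predictors(x1, x2):
    """VIF when a model has exactly two predictors: 1 / (1 − r₁₂²).

    With only two predictors, regressing one on the other gives R² = r₁₂²,
    so both predictors share the same VIF.
    """
    n = len(x1)
    m1, m2 = sum(x1) / n, sum(x2) / n
    s11 = sum((a - m1) ** 2 for a in x1)
    s22 = sum((c - m2) ** 2 for c in x2)
    s12 = sum((a - m1) * (c - m2) for a, c in zip(x1, x2))
    r = s12 / math.sqrt(s11 * s22)
    return 1 / (1 - r * r)


# Hypothetical predictor columns.
print(round(vif_two_predictors([1, 2, 3, 4, 5], [2, 4, 5, 4, 7]), 3))
```

These two columns have r₁₂² ≈ 0.758, giving VIF = 4.125, just under the common cutoff of 5.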

Is least squares the same as line of best fit?

🔑 In most contexts, yes — the least squares regression line is the most common definition of the "line of best fit" or "best fit line." However, technically, "best fit" depends on your criterion. Least squares minimizes the sum of squared vertical distances. Alternative criteria include minimizing absolute deviations (LAD/L1 regression) or minimizing perpendicular distances (total least squares / orthogonal regression). In statistics education and most software, "line of best fit" refers specifically to the least squares line unless otherwise stated.

What is the standard error of the slope?

📐 The standard error of the slope SE(m) measures the precision of the slope estimate. It is calculated as SE(m) = s / √SSxx, where s is the residual standard error and SSxx = Σ(xᵢ − x̄)².

📐 A smaller SE(m) means the slope estimate is more precise. It is used to construct confidence intervals and perform hypothesis tests for the slope. The t-statistic for testing whether the slope is significantly different from zero is t = m / SE(m), which follows a t-distribution with n − 2 degrees of freedom.
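A minimal Python sketch (standard library only; `slope_t_test` is an illustrative name) that computes SE(m) and the t-statistic from the formulas above:

```python
import math


def slope_t_test(xs, ys):
    """Return (m, SE(m), t) with SE(m) = s / √SSxx and t = m / SE(m), df = n − 2."""
    n = len(xs)
    x_bar, y_bar = sum(xs) / n, sum(ys) / n
    ss_xy = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys))
    ss_xx = sum((x - x_bar) ** 2 for x in xs)
    m = ss_xy / ss_xx
    b = y_bar - m * x_bar
    ss_res = sum((y - (m * x + b)) ** 2 for x, y in zip(xs, ys))
    s = math.sqrt(ss_res / (n - 2))   # residual standard error
    se_m = s / math.sqrt(ss_xx)
    return m, se_m, m / se_m


m, se_m, t = slope_t_test([1, 2, 3, 4, 5], [2, 4, 5, 4, 7])
print(f"m = {m:.3f}, SE(m) = {se_m:.3f}, t = {t:.2f} on {5 - 2} df")
```

For the five-point worked example, SE(m) ≈ 0.327 and t ≈ 3.06 with 3 degrees of freedom. The two-sided 5% critical value for 3 df is about 3.182, so with only five points this slope would not be declared significant at the 5% level, even though R² ≈ 0.76.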

How do I calculate a confidence interval for the slope?

📐 A confidence interval for the slope m is:

m ± t* × SE(m)

where t* is the critical value from the t-distribution with n − 2 degrees of freedom at the desired confidence level, and SE(m) = s / √SSxx.

📐 For a 95% confidence interval with n = 20 data points, df = 18 and t* ≈ 2.101. For example, if m = 2.5 and SE(m) = 0.4, the 95% CI is 2.5 ± 2.101 × 0.4 = (1.66, 3.34). If the interval does not contain zero, the slope is statistically significant at the 5% level.
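The interval itself is one line of arithmetic. A minimal Python sketch, assuming t* has already been looked up for n − 2 degrees of freedom (the function name is illustrative), applied to the numbers in the example above:

```python
def slope_confidence_interval(m, se_m, t_crit):
    """Two-sided interval m ± t*·SE(m); t_crit comes from a t-table for n − 2 df."""
    half_width = t_crit * se_m
    return m - half_width, m + half_width


low, high = slope_confidence_interval(2.5, 0.4, 2.101)  # m, SE(m), t* for df = 18
print(f"95% CI: ({low:.2f}, {high:.2f})")  # → 95% CI: (1.66, 3.34)
print("excludes zero:", not (low <= 0 <= high))
```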

What does a negative slope mean?

📐 A negative slope means that Y decreases as X increases — there is an inverse or negative linear relationship between the variables.

📐 For example, if the regression line for price vs. demand has a slope of −3, it means that for each $1 increase in price, demand is predicted to decrease by 3 units. A negative slope does not mean the model is bad; it simply indicates the direction of the relationship. The strength of the linear relationship is measured by |r| (equivalently R² = r²), not by the sign of the slope.

Can I use this calculator for homework?

🎯 Absolutely! This calculator is designed to be an educational tool that helps you learn statistics, not just get answers.

📐 It provides complete step-by-step solutions showing every intermediate calculation, so you can follow along, verify your manual work, and understand the mathematical process. Use it to check your homework answers, study for exams, or learn how to compute the LSRL by hand. Many instructors encourage using calculators to verify results — just make sure you also understand the underlying concepts and can replicate the steps on your own.

Related Regression Calculators

Discover more specialized regression modeling tools.

Exponential Regression Calculator

Model growth and decay patterns

Quadratic Regression Calculator

Fit parabolic curves to your data

Multiple Regression Calculator

Use two or more predictor variables

Pearson Correlation Calculator

Measure linear association strength

Regression Curve Calculator

Compare multiple model types

Regression Assumptions Checker

Verify your data meets OLS assumptions

Need to solve a different problem? Use our free regression equation calculator for all your statistical modeling needs.